How to translate technical manuals without losing precision

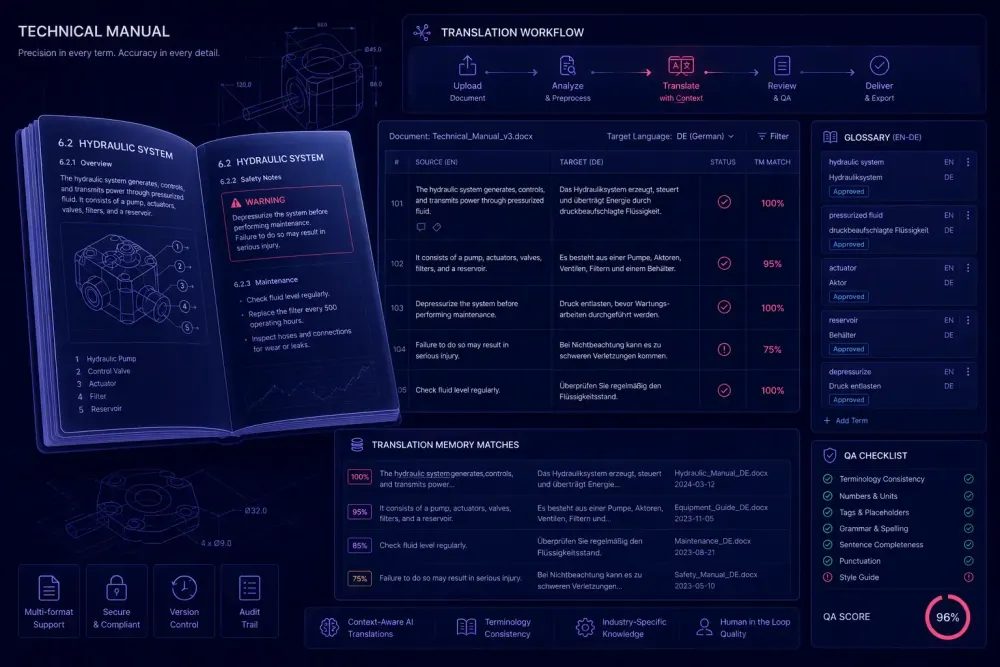

Technical manual translation requires more than language skill. Learn how to structure glossaries, AI workflows, and QA for precision at scale.

Technical manual translation sits at the intersection of language and engineering. The cost of a mistranslated torque value or an ambiguous safety warning isn't a revision cycle — it's a product recall, a safety incident, or a failed regulatory submission. We've worked with agencies handling industrial, aerospace, and medical equipment documentation long enough to see where technical manual translation consistently fails: not because translators lack skill, but because the workflow wasn't designed for the content type. This guide covers the decisions and processes that produce consistent, precise results, from glossary preparation to QA for safety-critical segments.

What makes technical manual translation different from other content types

Technical manuals — maintenance guides, installation instructions, operation manuals, safety data sheets — share a set of characteristics that set them apart from marketing copy or legal contracts.

Terminology density is high and tightly controlled. A single service manual for a CNC machine might introduce 200–400 product-specific terms, each with exactly one correct translation per target language. There's no room for synonyms: if the source says "spindle clamp," the translation doesn't get to say "spindle bracket" in one paragraph and "spindle holder" in the next. Both may be understood by a bilingual engineer. Neither is acceptable if the client's approved glossary says otherwise.

Procedural content follows strict sequential logic. Step 7 in an assembly procedure often refers to a component labeled in step 3. If any segment shifts meaning, downstream steps break. Errors in procedural text compound in ways they don't in general prose.

Technical manuals also carry embedded numbers, measurement units, tolerances, and part numbers that must survive translation intact. A torque value of 22 Nm doesn't become 16.2 lb·ft (and certainly not 22 lb·ft) without explicit client instruction on unit conventions. Translators working without clear guidance on this point will make judgment calls, and they'll make different ones across different pages.

Finally, many technical manuals are modular: shared warning blocks, repeated procedure steps, and standard header sections appear across entire product families, updated annually. Translation memory reuse is real and measurable in these workflows — but only if the project is structured to take advantage of it. A manual that gets translated as a flat file with no TM reference throws away that potential entirely.

None of this applies to a general product overview brochure for the same product. Brochures have different requirements: tone, cultural fit, persuasion. Technical manuals require precision above everything else.

Start with a domain glossary, not the document

The most common mistake we see in technical manual translation projects is opening the source file before building the glossary. By the time a translator reaches page 40, they've made a dozen term decisions that are now embedded in the TM — and half of them conflict with what a subject matter expert would have approved on day one.

The right sequence: extract candidate terms from the source document first. Run term extraction using a tool like SDL MultiTerm Extract or memoQ's term extraction module, or start with a frequency analysis if you don't have access to specialized software. Then take that candidate list to someone with subject domain knowledge — an engineer, a product specialist, or the client's technical team — and get each entry approved with its preferred target-language equivalent.
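If no term extraction software is available, the frequency analysis mentioned above can be approximated in a few lines. The following is a minimal sketch, not a replacement for a dedicated extraction tool: the function name, the stopword list, and the thresholds are all illustrative assumptions, and the tokenizer only handles unaccented English source text.

```python
import re
from collections import Counter

# Hypothetical stopword list; a real project would use a fuller one.
STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "on", "is", "with", "for"}

def candidate_terms(text, max_len=3, min_count=2):
    """Return (term, count) pairs for repeated word n-grams, most frequent first."""
    counts = Counter()
    # Split on sentence punctuation so n-grams never span a sentence boundary.
    for sentence in re.split(r"[.;:!?\n]+", text.lower()):
        words = re.findall(r"[a-z][a-z\-]*", sentence)
        for n in range(1, max_len + 1):
            for i in range(len(words) - n + 1):
                gram = words[i:i + n]
                # Skip n-grams that start or end with a stopword.
                if gram[0] in STOPWORDS or gram[-1] in STOPWORDS:
                    continue
                counts[" ".join(gram)] += 1
    return [(term, count) for term, count in counts.most_common() if count >= min_count]

sample = "Loosen the spindle clamp. Inspect the spindle clamp for wear. Tighten the spindle clamp."
for term, count in candidate_terms(sample):
    print(count, term)
```

The output is only a candidate list. It still has to go to the subject matter expert for approval, and single-word hits such as "clamp" usually need to be merged into the multi-word term they belong to.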

In practice: when we reviewed translation workflows for an industrial pump manufacturer's service manuals across five European languages, the glossary review step reduced post-editing time by roughly 30–40% compared to projects where glossaries were built reactively, after the first translated draft. Terms like "impeller," "stuffing box," and "mechanical seal" had client-preferred translations that differed from what general CAT tool suggestions would have produced. Without the glossary, post-editors spent that time making decisions rather than reviewing decisions.

A second example: in aerospace component maintenance manuals, some markets require terminology drawn from applicable maintenance standards — ATA iSpec 2200 conventions, for instance. A glossary built without checking those standards produces translations that read fluently but fail compliance review. That's a very expensive way to find out the glossary was wrong.

Once the glossary is approved, load it into your CAT tool and enforce it as a required reference throughout the project. For a more detailed look at structuring and maintaining client-facing glossaries across multiple projects and translators, see our terminology management guide.

Structuring the technical manual translation workflow

With the glossary approved, you can build the actual translation workflow. For a 50,000-word operation manual, here's how we'd approach it.

Start with a repetition analysis before quoting or scheduling. Technical manuals with modular structure — shared warning blocks, repeated procedure steps, standard headers — often show 25–40% exact or fuzzy TM match rates on a project with an established TM. If this is a first translation with no existing memory, you're starting from zero, but every confirmed segment becomes reusable on the next revision cycle. That payoff is worth building the TM correctly from the start.
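As a rough illustration of what a repetition analysis measures, the sketch below counts how many segments in a file repeat an earlier segment after case and whitespace normalization. It covers internal exact repetitions only. Fuzzy matching and matching against an existing TM are what a CAT tool's analysis adds on top, and the function names here are assumptions made for the example.

```python
import re
from collections import Counter

def normalize(segment):
    """Lowercase and collapse whitespace so trivially different copies compare equal."""
    return re.sub(r"\s+", " ", segment.strip().lower())

def repetition_rate(segments):
    """Share of non-empty segments that repeat an earlier segment in the same file."""
    counts = Counter(normalize(s) for s in segments if s.strip())
    total = sum(counts.values())
    # Every occurrence after the first one is a repetition.
    repeated = sum(count - 1 for count in counts.values())
    return repeated / total if total else 0.0

segments = [
    "WARNING: Disconnect power.",
    "Remove the cover.",
    "warning:  disconnect power.",
    "Remove the cover.",
    "Check the seal.",
]
print(f"{repetition_rate(segments):.0%} of segments are internal repetitions")
```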

Not all segments need the same attention. Sort by risk before assigning work:

Safety warnings and cautions require a mandatory critical review pass.

Specification and tolerance tables need numeric verification against the source.

Step-by-step procedures need a logic check to confirm that sequential references remain intact.

Headers and field labels need strict terminology compliance against the approved glossary.

Repeated content, once confirmed and propagated, needs a spot-check to verify that propagation applied correctly throughout the file.

Translator assignment matters more in technical content than in almost any other document type. A translator with domain experience in industrial machinery is not interchangeable with one whose background is general technical or marketing translation, even if the language combination matches. This is worth stating explicitly in the project brief and in onboarding documentation for new translators.

Version management: when the client delivers an updated manual, run a file diff against the previous source version before beginning work. Re-translating confirmed segments wastes time and introduces inconsistency that the TM should have prevented. Most CAT tools handle this through a project update workflow, but it needs to be triggered deliberately.
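The source diff can be sketched with Python's standard difflib module, assuming both versions of the manual have already been split into segment lists. The function name is hypothetical, and a CAT tool's project update feature does the same job with TM awareness. The sketch only shows which segments actually need a translator's attention.

```python
import difflib

def changed_segments(old_segments, new_segments):
    """Return segments in the new source that are new or modified relative to the old source."""
    matcher = difflib.SequenceMatcher(a=old_segments, b=new_segments, autojunk=False)
    changed = []
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        # 'replace' and 'insert' cover modified and added segments;
        # 'delete' has nothing to translate and 'equal' is already in the TM.
        if tag in ("replace", "insert"):
            changed.extend(new_segments[j1:j2])
    return changed

old = ["Remove the cover.", "Tighten the bolt to 22 Nm.", "Replace the cover."]
new = ["Remove the cover.", "Tighten the bolt to 25 Nm.", "Check the gasket.", "Replace the cover."]
print(changed_segments(old, new))
```

Deleted segments are deliberately not reported here, but they still matter for the target file, which has to drop the corresponding translations.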

Where AI fits in technical manual translation

AI translation can produce strong results in technical manual translation — but the conditions matter.

It works best when source text follows controlled language principles: short sentences, active voice, explicit component references, no ambiguous pronoun antecedents. Structured, repetitive content — safety warning blocks, parts lists, field labels, numbered procedure steps — is where AI-generated first drafts tend to be most reliable. The consistency of controlled technical writing gives the model less room to introduce unwanted variability.

Where extra caution applies: safety-critical warnings, measurement unit specifications, chemical compound names, and any text embedded in illustrations or callout boxes. These segments warrant mandatory human review regardless of what the AI confidence score says. A mistranslated pressure unit or a garbled chemical formula doesn't present as obviously wrong in the target language — it reads fluently while conveying the wrong value.

The translation prompt matters as much as the model. A prompt that specifies the subject domain, the intended reader (field service technician vs. end user), the required register, and any known conventions for the target market will produce better output than a generic instruction. Treat prompt preparation the same way you'd treat glossary preparation: it needs domain input before it can return domain-accurate output.
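One way to make that preparation repeatable is to assemble the prompt from explicit project inputs instead of writing it by hand for each job. The sketch below is an assumption about how such a template might look. The function, its parameters, and the wording of the prompt are illustrative and not tied to any particular AI translation tool.

```python
def build_translation_prompt(domain, reader, register, target_locale, conventions, glossary):
    """Assemble a domain-specific translation prompt from project inputs."""
    lines = [
        f"Translate the following {domain} documentation into {target_locale}.",
        f"Intended reader: {reader}.",
        f"Register: {register}.",
        "Keep all numbers, units, part numbers, and inline tags exactly as in the source.",
    ]
    if conventions:
        lines.append("Target-market conventions:")
        lines.extend(f"- {rule}" for rule in conventions)
    if glossary:
        lines.append("Use these approved terms without variation:")
        lines.extend(f"- {src} -> {tgt}" for src, tgt in sorted(glossary.items()))
    return "\n".join(lines)

prompt = build_translation_prompt(
    domain="industrial pump service",
    reader="field service technician",
    register="formal, instructional",
    target_locale="German (Germany)",
    conventions=["Write step instructions in the infinitive form."],
    glossary={"impeller": "Laufrad"},  # illustrative entry, not a client-approved term
)
print(prompt)
```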

These results depend on source quality. If the source document has writing problems (passive constructions stacked across multiple clauses, ambiguous pronoun references, sentences that span four lines), AI translation amplifies them rather than correcting them. A controlled language edit pass on the source is worth the investment on high-stakes manuals where mistranslation carries real liability.

For teams working with AI translation tools that accept DOCX input, SnapIntel provides a structured workflow that lets you load an approved glossary and configure a domain-specific translation prompt before the job runs — then review a QA report on the output. That's useful when you need segment-level visibility rather than a single file download with no audit trail.

QA for technical documents: what to check beyond fluency

QA for technical manual translation goes considerably further than standard language fluency checks. A proper QA pass should cover several layers that most general translation review workflows don't address by default.

Numerical accuracy. Every number in the translated document should match the source exactly. This sounds obvious, but number errors in technical content — AI-translated or human-translated — are more common than you'd expect. Run a numeric verification check; most CAT tools support this through configurable QA profiles. Don't rely on visual review to catch these.
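For teams without a configurable QA profile, the core of a numeric check is simple enough to sketch. The example below assumes that decimal commas and decimal points should count as equivalent and that numbers are not reformatted in any other way. Locale-specific thousands separators (for example "1,000" rendered as "1000") would need extra handling. The function names are illustrative.

```python
import re
from collections import Counter

NUMBER = re.compile(r"\d+(?:[.,]\d+)*")

def numbers_in(text):
    """Count the numbers in a segment, treating ',' and '.' as the same separator."""
    return Counter(m.group().replace(",", ".") for m in NUMBER.finditer(text))

def number_mismatches(source, target):
    """Return (missing_from_target, unexpected_in_target) as sorted lists of numbers."""
    src, tgt = numbers_in(source), numbers_in(target)
    missing = sorted((src - tgt).elements())
    extra = sorted((tgt - src).elements())
    return missing, extra

print(number_mismatches("Tighten to 22 Nm in 2.5 turns.", "Mit 22 Nm in 2,5 Umdrehungen anziehen."))
print(number_mismatches("Tighten to 22 Nm.", "Mit 32 Nm anziehen."))
```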

Unit consistency. Decide before translation starts whether units will be preserved as-is or converted, and apply that decision uniformly throughout the file. A manual that uses both "mm" and "millimeters" without a clear rule, or mixes metric and imperial inconsistently across chapters, fails basic QA standards even if every word is linguistically correct.
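If the agreed rule is to preserve units as-is, a per-segment check can confirm that the unit symbols attached to values are the same in source and target. This is a minimal sketch under that assumption. The unit list is illustrative and would need to match the client's conventions, and a convert-units policy would need a different check entirely.

```python
import re

# Illustrative unit list; extend it to match the project's unit conventions.
UNITS = ["Nm", "lb·ft", "mm", "cm", "bar", "psi", "kPa", "°C", "°F"]
VALUE_WITH_UNIT = re.compile(
    r"(\d+(?:[.,]\d+)?)\s*(" + "|".join(re.escape(u) for u in UNITS) + r")(?![A-Za-z])"
)

def units_in(text):
    """Return the sorted unit symbols that directly follow a number in the text."""
    return sorted(unit for _value, unit in VALUE_WITH_UNIT.findall(text))

def units_preserved(source, target):
    """True if the target carries exactly the same unit symbols as the source."""
    return units_in(source) == units_in(target)

print(units_preserved("Torque: 22 Nm", "Drehmoment: 22 Nm"))
print(units_preserved("Torque: 22 Nm", "Drehmoment: 22 lb·ft"))
```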

Terminology compliance. Cross-check translated segments against the approved glossary. On projects where multiple translators handled different chapters, terminology drift is common — the same source term gets translated three different ways depending on who worked on which section. A QA report that flags glossary violations per segment makes this review tractable rather than a manual hunt across hundreds of pages. Our translation QA guide covers a full framework for structuring this kind of check.
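A per-segment glossary check can be sketched as follows. It assumes a bilingual list of (source, target) segment pairs and a glossary mapping each source term to its approved target term. Matching is by case-insensitive substring, so inflected target forms will produce false positives that a CAT tool with morphological matching would avoid. The names and sample terms are illustrative.

```python
def glossary_violations(segments, glossary):
    """Flag segments whose source contains a glossary term but whose target lacks the approved equivalent.

    segments: list of (source_text, target_text) pairs
    glossary: dict mapping source term -> approved target term
    Returns a list of (segment_index, source_term, expected_target_term).
    """
    violations = []
    for index, (source, target) in enumerate(segments):
        for src_term, tgt_term in glossary.items():
            if src_term.lower() in source.lower() and tgt_term.lower() not in target.lower():
                violations.append((index, src_term, tgt_term))
    return violations

glossary = {"mechanical seal": "Gleitringdichtung", "impeller": "Laufrad"}
segments = [
    ("Replace the mechanical seal.", "Gleitringdichtung ersetzen."),
    ("Inspect the impeller.", "Das Flügelrad prüfen."),
]
print(glossary_violations(segments, glossary))
```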

Tag and formatting integrity. Technical manuals carry embedded tags for variable content, figure references, cross-references, and index entries. Broken or missing tags in the translated file cause downstream problems in the client's publishing system — InDesign, FrameMaker, DITA, or whatever they use for output. CAT tool QA profiles should include tag verification by default; if yours doesn't, set it up before the first technical manual project goes through.
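The logic behind tag verification is a multiset comparison of the tags in source and target. The sketch below assumes inline tags look like `<b>`, `</b>`, `<xref id="fig3"/>`, or numbered placeholders such as `{1}`. Real CAT tool exports vary, so the pattern is an assumption to adjust rather than a general rule.

```python
import re
from collections import Counter

# Assumed tag shapes: XML-style tags and numbered placeholders like {1}.
TAG = re.compile(r"</?[A-Za-z][^<>]*>|\{\d+\}")

def tag_mismatches(source, target):
    """Return (missing_from_target, unexpected_in_target) as sorted lists of tags."""
    src = Counter(TAG.findall(source))
    tgt = Counter(TAG.findall(target))
    return sorted((src - tgt).elements()), sorted((tgt - src).elements())

print(tag_mismatches('See <xref id="fig3"/> before step {1}.', 'Siehe <xref id="fig3"/> vor Schritt {1}.'))
print(tag_mismatches("Press <b>Start</b>.", "Drücken Sie <b>Start."))
```

This check confirms that every tag is present the right number of times. It does not verify tag order or nesting, which some publishing systems also depend on.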

Safety language. Any segment marked as a warning, caution, or note should get a second human review pass regardless of TM match rate or AI confidence score. This is non-negotiable on manuals with regulatory exposure — the translation of a safety instruction is not a place to rely on automation alone.

Post-editing technical translation efficiently

Post-editing technical content is not the same as reviewing creative writing. The question isn't "does this sound natural?" — it's "is this correct?"

A practical approach: categorize segments before starting the review pass. High-risk segments — safety warnings, numeric specifications, numbered procedures — get full review against the source. Confirmed TM matches with 100% exact status from a verified human-built TM can often be spot-checked rather than read line by line, especially in mature projects with a clean TM history. AI-translated segments get a structured review with the approved glossary open alongside.

Efficiency drops when post-editors have to correct problems that should have been prevented during preparation. If the AI model produced an unapproved term because no glossary was loaded, the post-editor has to determine the correct term — which requires domain knowledge and adds time that wasn't budgeted. Good preparation absorbs that cost upfront, where it's cheaper.

One practical triage method we've seen work well: use the QA report output to sort the post-editing queue. Segments that passed all automated checks get lighter attention. Segments flagged for glossary violations, number mismatches, or missing tags get immediate focus. This is consistently more efficient than reading every segment at the same review intensity, particularly on manuals over 30,000 words.
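The triage described above amounts to sorting the queue by a severity score derived from the QA flags. In this sketch the report structure, the flag names, and the weights are all hypothetical. An actual QA report will have its own format, and the weighting should reflect the project's risk profile.

```python
# Hypothetical flag names and weights; unknown flags default to 1.
SEVERITY = {"number_mismatch": 3, "missing_tag": 2, "glossary_violation": 2}

def triage(report):
    """Order segment ids so the most heavily flagged segments come first.

    report: list of dicts with an 'id' and a list of 'flags'.
    Segments with equal scores keep their original document order.
    """
    def score(entry):
        return sum(SEVERITY.get(flag, 1) for flag in entry["flags"])
    return [entry["id"] for entry in sorted(report, key=score, reverse=True)]

report = [
    {"id": 1, "flags": []},
    {"id": 2, "flags": ["glossary_violation"]},
    {"id": 3, "flags": ["number_mismatch", "missing_tag"]},
]
print(triage(report))
```

Safety warnings would still go to full review regardless of their score, since a clean automated report says nothing about whether the warning conveys the right meaning.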

Where to start

If you're setting up a technical manual translation project today, the sequence is: extract candidate terms from the source, get client approval on target-language equivalents, load the approved glossary into your CAT tool, run AI or human translation with a domain-specific prompt or brief, route the output to a post-editor with subject-matter knowledge, then run QA covering numeric accuracy, tag integrity, and terminology compliance before delivery.

Every step in that sequence can be partially automated. The manual steps are not the bottleneck. The bottleneck is almost always skipping glossary review to save two days at the start, then spending a week in post-editing fixing the consequences — consequences that were predictable before the file was ever opened.