The rise of the AI-augmented translator: a new kind of professional

The AI-augmented translator isn't a new job title — it's a shifted skill set. Here's what the role looks like and how to position yourself in it.

The phrase "AI-augmented translator" has been circulating in industry conversations for a few years, but in 2026 it describes a daily reality rather than a forecast. The professionals we talk to regularly are no longer spending most of their working hours generating translations from scratch. They're reviewing AI output, correcting errors that require cultural or domain knowledge, preparing glossaries and prompts before jobs start, and making judgment calls that automated systems still get wrong. The role has shifted — in scope, in economics, and in the skills that actually differentiate professionals. Understanding what that shift means in concrete terms is more useful than debating whether AI will eventually eliminate the need for human linguists altogether.

What the AI-augmented translator actually does

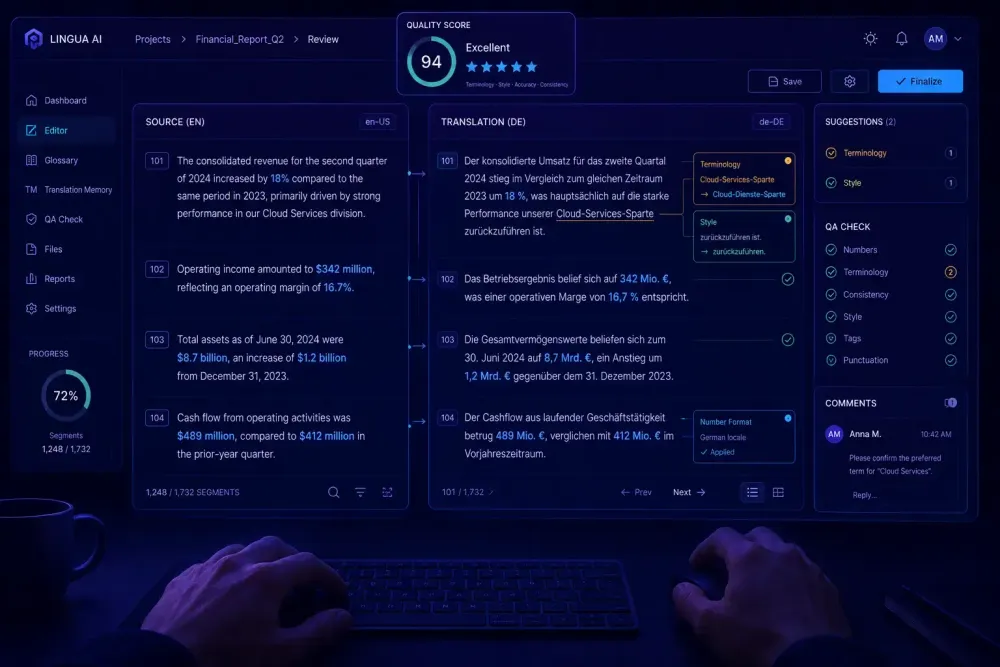

Strip away the terminology and the picture is fairly simple. An AI-augmented translator runs AI pre-translation on their documents and then works through the output rather than starting from an empty target field. The primary contribution has moved from generation to evaluation: catching errors, fixing terminology, restoring nuance that the model flattened, and deciding which segments the AI got right.

In practice, this often looks like the following. A freelancer receives a 5,000-word document from a pharmaceutical client. Instead of translating segment by segment in their CAT tool, they run AI pre-translation with a domain glossary loaded, then work through the output in post-editing mode — correcting regulatory terminology, fixing sentence structures the model made grammatically correct but contextually wrong, and flagging places where the source text itself was ambiguous. The same job might take four hours instead of eight, depending on how well the glossary was prepared and how consistent the source text is.

This isn't a fundamentally new concept. Machine translation post-editing (MTPE) has existed since the statistical MT era. What has changed is the quality floor. Neural MT and large language model output is, on average, more fluent and less obviously wrong than what post-editors were working with a decade ago. That changes the character of the work — less wholesale rewriting, more targeted correction requiring precise judgment. Based on what we hear from agency contacts and from translators in our network, a growing share of professional volume is now processed through some form of AI pre-translation before human review, with that share increasing across most major language pairs and content types.

The defining characteristic of the AI-augmented translator isn't which tools they use. It's that they've rebuilt their process around AI output rather than treating it as an optional convenience feature layered on top of a traditional workflow.

The skills that matter more now

When AI handles the first pass, the distribution of professional skill shifts noticeably.

Terminology judgment becomes more important, not less. AI models regularly introduce plausible-looking substitutions that are technically wrong for a given client, domain, or regulatory context. A translator reviewing a medical device manual needs to catch when the model has used a synonym that reads naturally but doesn't match the controlled vocabulary required for regulatory submission. That catch requires domain knowledge the model doesn't have. The fluency of the output makes this harder, not easier: a fluent but wrong translation is much harder to spot than a visibly broken one.

Glossary and prompt preparation has become a real professional skill. Before a translation job starts, an experienced AI-augmented translator sets up approved term pairs, writes domain-specific instructions for the model, and often tests output on a short sample to identify systematic error patterns early. This work is invisible in the final product but directly determines how much correction effort the post-editing phase will require.
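Part of that sample test can be automated. The following is a minimal sketch in Python, not a description of any particular CAT tool's feature: it assumes the glossary is a plain mapping of source term to approved target term and that the sample is a list of (source, target) segment pairs. The function name `find_glossary_misses` and the English-to-German terms are hypothetical, chosen only for illustration. The match is a simple case-insensitive substring test, so it will miss inflected forms and should be treated as a first filter rather than a verdict.

```python
from collections import Counter


def find_glossary_misses(segments, glossary):
    """Return (segment_index, source_term, approved_target) for every segment
    whose source contains a glossary term but whose target lacks the
    approved translation."""
    misses = []
    for index, (source, target) in enumerate(segments):
        source_lower = source.lower()
        target_lower = target.lower()
        for source_term, approved_target in glossary.items():
            term_in_source = source_term.lower() in source_lower
            approved_in_target = approved_target.lower() in target_lower
            if term_in_source and not approved_in_target:
                misses.append((index, source_term, approved_target))
    return misses


# Hypothetical glossary and AI output sample (illustrative only).
glossary = {
    "adverse event": "unerwünschtes Ereignis",
    "medical device": "Medizinprodukt",
}
segments = [
    ("Report any adverse event within 24 hours.",
     "Melden Sie jede Nebenwirkung innerhalb von 24 Stunden."),
    ("The medical device must be sterilized.",
     "Das Medizinprodukt muss sterilisiert werden."),
    ("Each adverse event is logged.",
     "Jede Nebenwirkung wird protokolliert."),
]

misses = find_glossary_misses(segments, glossary)

# Count misses per term: a term that is missed repeatedly points to a
# systematic pattern worth fixing in the glossary or the instructions.
miss_counts = Counter(source_term for _, source_term, _ in misses)
for term, count in miss_counts.most_common():
    print(f"{term}: missed in {count} segment(s)")
```

A term that shows up repeatedly in the miss counts is a candidate for a stronger glossary entry or an explicit instruction before the full job runs. A term missed once is more likely a one-off that normal review will catch.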

Critical reading speed matters more than it used to. Reviewing 3,000 words of AI output efficiently means reading in a different mode — faster, more selective, focused on the specific error types a model tends to produce for a given language pair and subject area. A legal translator we work with described it as reading for wrongness rather than reading for correctness. Once you learn which errors the AI makes consistently, you can move through clean sections quickly and slow down where genuine judgment is actually needed.

Raw typing speed and segment-level fluency, by contrast, are less central than they were. The translator is no longer competing on words generated per hour. They're competing on accurate judgment per hour — which is a different capability and requires different deliberate practice to develop.

Post-editing as a professional discipline

MTPE has been part of the translation industry for years, but its status has changed considerably. For much of the 2010s, post-editing was treated as lower-tier work, often priced below full human translation and associated with statistical MT output that regularly required near-complete rewriting. That framing made sense given the quality of the machine output being processed at the time.

The current situation is different. With better AI output, the post-editing task at the upper end of the market sits closer to review and refinement than to rewriting. The cognitive demand is often comparable to full human translation even when the time investment is lower. This has pushed the industry to reconsider how MTPE is priced and how it's positioned professionally.

We've spoken with translators in legal and financial domains who have adopted MTPE not because clients demanded it, but because it allows them to handle more volume per day while maintaining the quality standards their clients expect. One legal translator described her current model: she prices MTPE projects at roughly 70% of her standard per-word rate, handles approximately twice the daily word count, and focuses her expertise on the regulatory and contract phrasing sections where AI output is consistently unreliable. The work is different from what she did five years ago, but it still draws on the same domain knowledge and the same precision of judgment. The ratio of generation to evaluation has just flipped.
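The arithmetic behind that pricing model is worth spelling out. In the sketch below, only the 70% rate and the roughly doubled daily volume come from the translator's description. The baseline per-word rate and daily word count are placeholder assumptions, and the calculation assumes the working day stays the same length.

```python
def daily_revenue(rate_per_word, words_per_day):
    """Revenue for one working day at a given per-word rate."""
    return rate_per_word * words_per_day


def break_even_volume_multiplier(rate_fraction):
    """How many times the baseline volume is needed to match baseline
    revenue when the rate drops to `rate_fraction` of the standard rate."""
    return 1 / rate_fraction


# Placeholder baseline figures (assumptions, not from the article).
base_rate = 0.12    # standard per-word rate, any currency
base_words = 2500   # words per day in full-translation mode

full_translation = daily_revenue(base_rate, base_words)

# MTPE at 70% of the standard rate and twice the daily volume.
mtpe = daily_revenue(base_rate * 0.70, base_words * 2)

ratio = mtpe / full_translation
break_even = break_even_volume_multiplier(0.70)

print(f"MTPE daily revenue relative to full translation: {ratio:.2f}x")
print(f"Volume multiplier needed to break even at 70%: {break_even:.2f}x")
```

The ratio does not depend on the placeholder baseline: 0.70 times 2 is 1.4, so daily revenue rises by about 40% under these assumptions. The break-even figure is the more useful number for planning. At 70% of the standard rate, anything below roughly 1.43 times the usual daily volume means earning less per day than before.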

This model works best when the domain is well-defined, the client has a usable glossary, and the source text is clean. It works poorly if the source is badly written, if the domain is so narrow that AI training data is thin, or if a single error in the output carries serious regulatory or legal consequences.

Where human judgment still carries the weight

There are categories of work where AI pre-translation adds limited benefit, and being clear about them is more honest than overstating what the augmented model can do.

Highly specialized regulatory content (FDA submissions, patent claims, legal contracts with jurisdiction-specific drafting conventions) often requires a level of precision that current AI output doesn't deliver reliably. The error rate may be low in absolute terms, but when any single error carries regulatory or legal consequences, the review burden can approach the effort of translating from scratch. Post-editing such content sometimes takes longer than translating it from scratch would have.

Creative and adaptive translation, including marketing copy and transcreation work, still depends on cultural judgment and intentional voice that AI translation tends to flatten. The difference between a translated tagline and a localized one that actually works in the target market is still a human decision in most cases, and the AI's confident fluency can obscure just how flat the result is until someone with real cultural knowledge reads it.

Source text quality is a limiting factor that doesn't get discussed enough. When the source document is poorly written or ambiguous, AI translation doesn't compensate for that — it carries the problems into the target language in ways that are often harder to untangle than the original ambiguity. Translators working from poor source texts find post-editing yields little time advantage and sometimes creates extra work.

These aren't arguments against the AI-augmented model. They define the conditions under which it applies — and knowing those conditions precisely separates a translator who uses the model well from one who applies it without judgment.

The economics: what's getting better and what isn't

The economic picture for AI-augmented translators is genuinely better in some scenarios and harder in others, and it's worth being direct about both.

In volume-heavy domains where AI output quality is consistent — IT localization, standard product documentation, corporate communications in major language pairs — throughput has increased substantially. Translators who have adapted their workflows can handle more words per day. If they price strategically, they can maintain or improve their effective hourly rate even as per-word rates face downward pressure from clients who are increasingly aware that raw AI generation is cheap.

That downward pressure on per-word rates is real. As pre-translation pipelines become more standard across agencies, clients understand that the generation step now costs little. The professional justification for translator rates has to shift from production volume toward review accuracy and error-catching expertise. That's a harder argument to make in a client brief, but it's the accurate one, and translators who communicate it clearly are better positioned than those who compete on price alone.

Specialization has become a clearer economic differentiator than it was before. A translator with deep expertise in pharmaceutical regulatory submissions can justify higher post-editing rates than a generalist because the errors that matter in that domain require specific knowledge to catch. Two people reviewing the same AI output with different domain backgrounds will produce meaningfully different results. That gap is the professional's competitive position — and it's one that AI doesn't erode the way it erodes generic high-volume production work.

For more context on how AI tools are reshaping day-to-day workflows across the industry, the overview of AI translation tools in 2026 covers how practitioners across different workflow types are adapting their approaches.

How agencies are restructuring their translator relationships

From the agency side, the shift to AI-augmented workflows has changed what a productive translator relationship looks like. Agencies running pre-translation pipelines at scale need translators who can work comfortably in post-editing mode and assess AI output quickly and accurately. That's a different skill profile than what was prioritized in a fully manual workflow.

We've seen some agencies begin separating their rosters more explicitly: translators who primarily handle MTPE tasks and those reserved for projects where AI pre-translation is inappropriate or insufficient. The second group — handling high-stakes regulatory, legal, or creative work — tends to command higher rates, and the distinction between the two is becoming more explicit in project briefs.

There's also growing attention to glossary governance on the agency side. When pre-translation is running at scale, the quality of the shared glossary directly determines how much post-editing each translator has to do. Agencies that maintain clean, domain-specific glossaries see measurable reductions in post-editing time per project. Translators who understand how glossary quality affects AI output, and who can give useful feedback on it, are more valuable partners than those who simply correct whatever they find in the output without feeding anything back.

This creates an interesting dynamic: the most valuable translators in an AI-augmented agency workflow are often the ones who operate slightly above the translation task itself. They're thinking about why the AI made certain errors and what would need to change upstream to prevent them. That's a different orientation than what the job required before, and it's not a natural shift for everyone.

Getting started: how to assess your own workflow honestly

If you haven't yet restructured your workflow around AI pre-translation, the most practical starting point isn't choosing a tool — it's figuring out which parts of your current work are likely to benefit and which aren't.

Take a recent project representative of your usual workload and break it into rough categories: terminology-dense specialized content, standard structural content with recurring patterns and formulaic sections, and creative or adaptive content where your professional voice is doing the most work. AI pre-translation returns the most consistent benefit on the middle category. The first category benefits significantly from strong glossary preparation before the model runs. The third may not benefit much at all, and forcing it into an MTPE workflow can produce worse results than starting from scratch.

From there, run a small test before committing to a full workflow change. Take a short document from your standard-content category, run it through AI pre-translation with a domain-appropriate glossary, and track your post-editing time against your usual full-translation pace for comparable material. That gives you actual data for your language pair, domain, and typical source text quality rather than relying on general claims about productivity gains.
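The comparison itself is simple enough to keep in a short script or spreadsheet. Here is one possible sketch in Python. The function names and the sample figures (a 1,200-word test document, 90 minutes of post-editing, a usual pace of 400 words per hour) are hypothetical and should be replaced with your own measurements.

```python
def words_per_hour(word_count, minutes):
    """Convert a word count and elapsed minutes into words per hour."""
    return word_count / (minutes / 60)


def compare_throughput(word_count, post_edit_minutes, baseline_words_per_hour):
    """Compare a timed post-editing pass against a known full-translation pace."""
    post_edit_wph = words_per_hour(word_count, post_edit_minutes)
    # Time the same document would take at the usual full-translation pace.
    baseline_minutes = word_count / baseline_words_per_hour * 60
    return {
        "post_edit_wph": post_edit_wph,
        "baseline_minutes": baseline_minutes,
        "minutes_saved": baseline_minutes - post_edit_minutes,
        "speedup": post_edit_wph / baseline_words_per_hour,
    }


# Hypothetical test run (replace with your own timings).
result = compare_throughput(
    word_count=1200,
    post_edit_minutes=90,
    baseline_words_per_hour=400,
)

for name, value in result.items():
    print(f"{name}: {value:.1f}")
```

For a fair comparison, the post-editing time should include the glossary and instruction setup for that document, not only the editing pass. A single run is also a small sample, so repeating the test on two or three documents of the same type gives a steadier figure.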

Pay attention to where you stop and think during the post-editing pass. If you're stopping frequently to reconstruct meaning from a confused translation, the AI output isn't good enough in that domain for post-editing to be efficient. If you're mostly making terminology corrections and light fluency edits, the model is doing useful work.

The translators who have adapted most successfully to this model are not necessarily those who adopted AI tools first. They're the ones who were clearest about where their professional expertise makes a real difference — and who built their AI-augmented workflow around preserving that expertise rather than simply automating the generation step and calling it done.