Terminology consistency in translation: why it matters and how to achieve it

Terminology consistency in translation doesn't happen by accident. Learn how to build glossaries, structure termbases, and enforce consistency across projects and translators.

Terminology consistency in translation sounds like an obvious goal, but it's one that agencies and freelancers fail to achieve far more often than they'd admit. Not because they don't care about it—but because the systems that produce consistent terminology require deliberate setup and maintenance, and most teams are moving too fast to build them properly. We've seen projects where the same legal term was rendered four different ways across a 50,000-word commercial contract because four translators were working in parallel from four different understandings of what the client expected. The client caught it in review. That kind of error doesn't come from poor translation skill. It comes from missing infrastructure.

What poor terminology consistency in translation actually costs

The cost of inconsistent terminology shows up in three places: client revisions, post-editing time, and quality perception.

Client revisions caused by terminology are among the most expensive to correct. They often require going back through a completed document to find every instance of a term and standardizing it. If a 20,000-word technical manual uses three different translations for one product component, each instance requires a decision about which version to adopt, then tracking down all the others. This is the kind of rework that makes agencies defensive about revision scope in their client contracts—and rightly so, because the cost is real.

On the post-editing side, inconsistent terminology shows up as repeated corrections. A post-editor who fixes the same mistranslated term 25 times across a document is not working efficiently, and this kind of rework is frequently invisible in project tracking: it gets absorbed into general editing time without anyone realizing the root cause. When we look at post-editor correction logs on projects that lacked a pre-approved glossary, terminology fixes often account for 40–60% of total correction volume.

Quality perception is harder to measure but equally real. A client reading a document where the same product is called by three different names notices it—even if they can't articulate why the document feels unprofessional. Legal and technical clients often have their own internal terminology standards and will catch deviations immediately, sometimes flagging them as accuracy errors rather than style preferences.

Work by CSA Research on terminology management programs has shown that agencies with formalized glossary workflows see meaningful reductions in rework on technical and legal translation projects, where term density is high. The investment in a proper termbase pays back faster in those domains than in general content translation.

That said, this doesn't apply uniformly. For short-form content with low term density—a social media caption, a brief marketing email—an elaborate terminology management system is overkill. The cost-benefit calculation shifts significantly with document length and technical complexity.

Termbase vs. glossary: what you're actually building

These terms are often used interchangeably in practice, but the distinction matters when you're deciding what to build.

A glossary is typically a flat list: source term, target term, sometimes a definition or usage note. It answers one question: what is the approved translation of this term? Glossaries are easy to create, easy to share, and sufficient for most projects. A client-specific glossary maintained in a spreadsheet or inside a CAT tool provides the majority of the benefit for the majority of translation work.
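As a minimal sketch of how little structure a flat glossary needs, the example below loads one from CSV text into a lookup table. The column names (`source`, `target`, `note`), the sample rows, and the `load_glossary` helper are all assumptions made for illustration, not a standard export format from any tool.

```python
import csv
import io

# Hypothetical glossary export; the column names are an assumption, not a standard.
GLOSSARY_CSV = """source,target,note
control valve,vanne de régulation,Hydraulics manuals
Dashboard,Dashboard,Product name: keep in source language
"""


def load_glossary(csv_text: str) -> dict[str, dict[str, str]]:
    """Map each lowercased source term to its approved target term and usage note."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {
        row["source"].strip().lower(): {
            "target": row["target"].strip(),
            "note": row["note"].strip(),
        }
        for row in reader
    }


glossary = load_glossary(GLOSSARY_CSV)
print(glossary["control valve"]["target"])
```

Lowercasing the source term on load keeps lookups case-insensitive, which is usually what a translator expects when a term appears at the start of a sentence.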

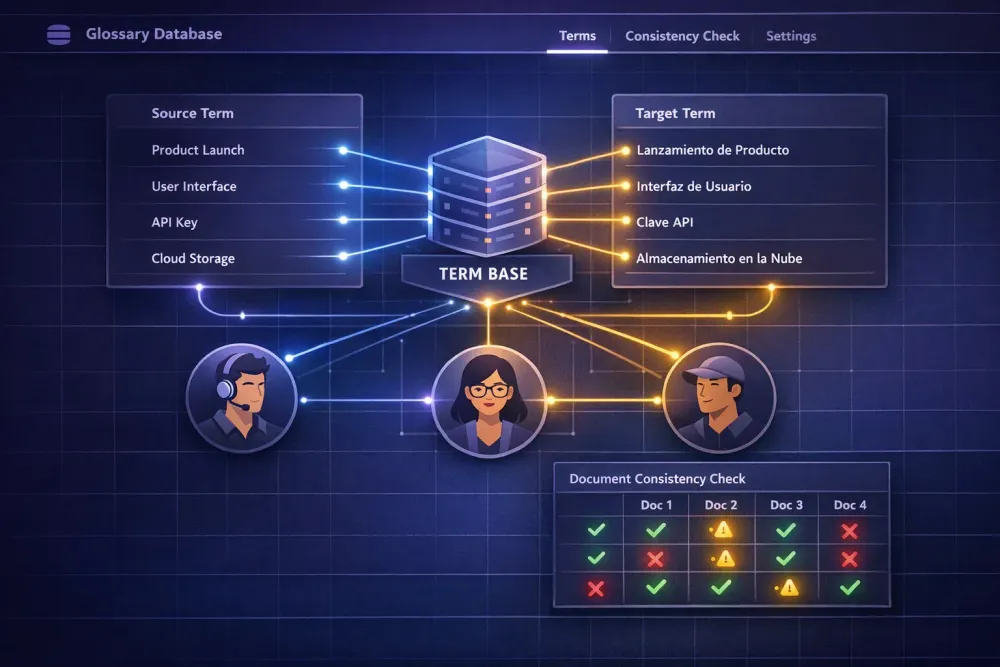

A termbase (often formatted to the TBX standard) is a structured database that stores additional attributes alongside each entry: domain, subject field, usage context, definition, source, client, project, date of approval, status (preferred, deprecated, forbidden). A termbase answers: what is the approved translation of this term, why, for which client, in which context, and is it still current?

The additional structure matters when you have many terms across multiple clients with overlapping but distinct terminology preferences, or when terms have changed over time and outdated versions need to be flagged as deprecated rather than simply deleted.
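To make the difference concrete, here is a rough sketch of a termbase-style entry with a few of the attributes listed above, plus a filter that skips deprecated and forbidden entries instead of deleting them. This is not the TBX format itself; the `TermEntry` fields, the `TermStatus` values, and the sample data are hypothetical and chosen only to mirror the attributes named in the text.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class TermStatus(Enum):
    PREFERRED = "preferred"
    DEPRECATED = "deprecated"
    FORBIDDEN = "forbidden"


@dataclass(frozen=True)
class TermEntry:
    source: str
    target: str
    client: str
    domain: str
    usage_context: str
    approved_on: date
    status: TermStatus = TermStatus.PREFERRED


def current_terms(entries, client, domain=None):
    """Return only the preferred entries for a client, optionally limited to one domain."""
    return [
        e for e in entries
        if e.client == client
        and e.status is TermStatus.PREFERRED
        and (domain is None or e.domain == domain)
    ]


# Hypothetical entries: the older rendering is kept but flagged as deprecated.
entries = [
    TermEntry("user account", "compte utilisateur", "Acme", "software",
              "UI strings", date(2024, 3, 1)),
    TermEntry("user account", "compte d'utilisateur", "Acme", "software",
              "UI strings", date(2022, 6, 1), TermStatus.DEPRECATED),
]
print([e.target for e in current_terms(entries, "Acme")])
```

Keeping the deprecated entry in the data means a QA step can still recognize the old rendering and flag it, which a plain deletion would make impossible.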

Most translation agencies don't need a full termbase for most of their work. A well-maintained client glossary—whether in a spreadsheet, or in the built-in glossary feature of a CAT tool that surfaces term suggestions during translation—handles the practical case. The termbase structure becomes worthwhile when the number of managed terms per client is in the hundreds, or when multiple translators working on the same account need to search and filter by attributes like usage context or approval status.

The failure mode to avoid is building something more sophisticated than your team will actually maintain. A simple glossary updated after every project is more useful than a well-designed termbase that gets abandoned after three months because the maintenance burden is too high.

Building a glossary that translators will consult

A glossary that exists but isn't consulted during translation doesn't solve the consistency problem. The goal is a glossary that translators access because it saves them time and uncertainty—not because they're required to.

The starting point is populating the glossary before translation begins, not during review of the first draft. This requires either extracting terms from the client's source materials before the project starts, or building the glossary on the first project and ensuring it's attached from the second project onward. Starting with no glossary on project one and building it during review is acceptable—but only if the review outputs actually get into the glossary before project two starts.

For content: focus on terms that represent translation decisions rather than translation facts. Common nouns with obvious equivalent translations don't need entries. What needs entries: product and feature names, defined terms in legal documents, domain-specific vocabulary where multiple valid translations exist, terms the client has previously corrected in a delivered translation, and terms that must not be translated at all.

Definition and context fields are optional but useful for terms where the correct translation varies by context. A product name might stay in source language in UI strings but be translated in user documentation for a different market. That context information belongs in the glossary alongside the term and its approved translation.

Updating is as important as initial population. A glossary that doesn't reflect client feedback and terminology changes becomes a liability—translators following an outdated entry will produce output the client has already rejected. We treat glossary updates as part of the project close process: before archiving a project, add or revise any terms where client revisions revealed a preference. This takes 10–15 minutes on most projects and prevents the same correction from occurring on the next one.

Our guide on translation terminology management covers the full lifecycle of term management in more detail, including how to structure glossaries for multi-translator projects where coordination risk is higher.

Getting consistent results across multiple translators

Terminology consistency gets harder as project teams grow. Two translators working independently on different sections of the same document can each produce locally consistent work that is inconsistent with each other—and both are technically correct, which makes the problem harder to explain in review.

The mechanisms that prevent this are straightforward, if not always implemented: a shared, accessible glossary that all translators on the project receive before work starts; a reconciliation step before final delivery that checks for term consistency across sections or files; and a clear instruction in the project brief stating where the glossary is and what it represents.

CAT tools make this substantially easier. When translators work in an environment where the glossary automatically surfaces approved terms as they type, consistency improves without additional coordination effort. In Smartcat, for instance, the glossary is attached to the project and visible to every translator working on it, rather than being a separate document that may or may not get opened. This is one of the practical reasons agencies running multi-translator projects inside CAT platforms see better terminology consistency than those coordinating via email and shared spreadsheets.

For terminology not covered by the glossary, the project brief should specify a decision rule: what the translator should do when they encounter a term with no approved translation. The options are to use a natural-language equivalent and mark it for review, to leave it in source language with a flag, or to contact the project manager. The specific answer matters less than the consistency—all translators on the same project should handle unrecognized terms the same way, otherwise different sections of the document will have different approaches to the same problem.

When a project involves multiple target language pairs—English into French, German, and Spanish simultaneously—terminology alignment across those language pairs becomes a separate coordination challenge. Some clients have a preferred term in each target language that needs to be stored separately per pair. Others don't care about cross-language alignment at all. Clarifying this at project intake prevents the painful experience of trying to reconcile it at delivery.
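One way to surface this at intake is to store approved terms per source term and target language, then list the combinations that have no approved translation yet. The sketch below assumes a simple in-memory mapping; the `APPROVED` data and the `missing_pairs` helper are hypothetical.

```python
# Hypothetical per-pair store: (source term, target language code) -> approved target term.
APPROVED = {
    ("user account", "fr"): "compte utilisateur",
    ("user account", "de"): "Benutzerkonto",
    ("user account", "es"): "cuenta de usuario",
    ("dashboard", "fr"): "tableau de bord",
    ("dashboard", "de"): "Dashboard",
}


def missing_pairs(approved, source_terms, target_langs):
    """List (term, language) combinations that have no approved translation."""
    return [
        (term, lang)
        for term in source_terms
        for lang in target_langs
        if (term, lang) not in approved
    ]


print(missing_pairs(APPROVED, ["user account", "dashboard"], ["fr", "de", "es"]))
```

An empty result means every term is pinned for every target language; anything else is a question to put to the client before translation starts.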

How CAT tools and AI translation affect terminology consistency

CAT tools have been the primary mechanism for enforcing terminology consistency for decades. Translation memory surfaces previous translations of matching segments, and glossary integration suggests approved terms at the segment level. Both reduce cognitive load on the translator and make consistent decisions easier to apply at volume.

AI translation changes this picture in ways that require explicit attention.

AI translation engines can be given glossary terms as part of the prompt or context, and they will generally try to apply them—but with less reliability than a hard-coded CAT tool lookup that forces the approved term. The model may apply the glossary term correctly in 90% of segments and substitute a near-synonym in the remaining 10%, producing a failure mode that looks like human inconsistency but has a different cause.
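A minimal sketch of the prompt side, assuming the glossary is a plain source-to-target mapping: it builds a block of instructions containing only the terms that actually occur in the text being translated. The `glossary_block` helper and the instruction wording are assumptions, and nothing here calls a real translation engine.

```python
def glossary_block(source_text: str, glossary: dict[str, str]) -> str:
    """Build prompt lines for the glossary terms that occur in source_text."""
    lowered = source_text.lower()
    lines = [
        f'- "{src}" must be translated as "{tgt}"'
        for src, tgt in glossary.items()
        if src.lower() in lowered  # plain substring match, so plurals like "valves" also hit
    ]
    if not lines:
        return ""
    return "Use these approved terms exactly:\n" + "\n".join(lines)


# Hypothetical glossary and source segment.
glossary = {"control valve": "vanne de régulation", "flange": "bride"}
print(glossary_block("Close the control valve.", glossary))
```

Sending only the relevant terms keeps the prompt short, but as the paragraph above notes, the model may still ignore an instruction, so this does not replace a check on the output.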

This means AI translation workflows need an explicit terminology QA step that CAT-only workflows handle somewhat automatically. Checking that approved terms appear in their correct form across a translated document should be on the review checklist, not an assumption.

Some teams address this programmatically before human post-editing begins: they find every segment whose source contains a glossary term and verify that the approved translation appears in the target. This catches systematic errors quickly and lets post-editors focus on the flagged segments rather than scanning the entire document for term problems.
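A rough sketch of such a check, assuming the translation is available as a list of (source, target) segment pairs and the glossary as a source-to-target mapping. The `TermIssue` record and `check_terms` function are hypothetical names. The match is a simple case-insensitive search, so inflected forms of the approved term in the target language will be reported as false positives and need a human look.

```python
import re
from dataclasses import dataclass


@dataclass
class TermIssue:
    segment_index: int
    source_term: str
    expected: str


def check_terms(segments, glossary):
    """Flag segments whose source has a glossary term but whose target lacks the approved term."""
    issues = []
    for i, (source, target) in enumerate(segments):
        for src_term, approved in glossary.items():
            in_source = re.search(r"\b" + re.escape(src_term), source, re.IGNORECASE)
            in_target = re.search(re.escape(approved), target, re.IGNORECASE)
            if in_source and not in_target:
                issues.append(TermIssue(i, src_term, approved))
    return issues


# Hypothetical bilingual segments: the second uses a near-synonym.
segments = [
    ("Close the control valve.", "Fermez la vanne de régulation."),
    ("Inspect the control valve weekly.", "Inspectez la vanne de commande chaque semaine."),
]
glossary = {"control valve": "vanne de régulation"}
for issue in check_terms(segments, glossary):
    print(issue)
```

The output is a list of segment indexes to review, which is usually easier to hand to a post-editor than a pass/fail result for the whole document.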

One area where AI translation can actually improve terminology consistency compared to large parallel human teams: when a single AI run processes an entire document, the same context shapes every segment, which can produce more consistent style and phrasing choices than five translators working in parallel with different personal preferences. The terminology still needs to be explicitly pinned in the prompt or context—but there are fewer independent decision-makers introducing variation at the segment level.

If you work with Smartcat bilingual DOCX files and want to systematically build glossary and prompt context before AI translation runs, SnapIntel integrates terminology preparation directly into the project workflow—glossary generation and prompt configuration happen before translation starts, rather than after terminology problems appear in the output.

Measuring terminology consistency and improving from what you find

Terminology consistency is easier to improve when you measure it, which requires isolating terminology errors from other error types in your review process.

One practical approach: define a terminology-specific QA pass in your review workflow. After translation and post-editing, check the translated output against the approved glossary. For each glossary term that appears in the source, verify that the corresponding approved translation appears consistently throughout the output. This takes longer than a general quality review but catches a specific and correctable class of problems.

When you find inconsistencies, the response depends on cause. If different translators handled the term differently, add a usage note to the glossary that clarifies the context rules. If an AI translation engine consistently chose a near-synonym over the approved term, strengthen the glossary instruction in the prompt or add an explicit negative rule ("do not use [SYNONYM]—always use [APPROVED TERM]"). If the client revised the term in review, update the glossary before the next project.

Tracking terminology errors by project over time gives you signal on which client glossaries need more work, which language pairs produce more terminology variation, and whether new translators onboarded to an account are applying the glossary correctly. This kind of tracking doesn't require sophisticated tooling—a note in a project log and a periodic review of post-editor correction categories is sufficient to spot patterns over a few months.
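If the project log is kept in a structured form, the periodic review can be as simple as the sketch below, which computes the share of corrections that were terminology fixes, grouped by client or by language pair. The `ProjectLog` fields and every number in the sample data are made up for illustration.

```python
from collections import Counter
from typing import NamedTuple


class ProjectLog(NamedTuple):
    project: str
    client: str
    language_pair: str
    terminology_fixes: int
    total_fixes: int


def terminology_share(logs, key):
    """Share of corrections that were terminology fixes, grouped by key(log)."""
    term_fixes, all_fixes = Counter(), Counter()
    for log in logs:
        term_fixes[key(log)] += log.terminology_fixes
        all_fixes[key(log)] += log.total_fixes
    return {k: term_fixes[k] / n for k, n in all_fixes.items() if n}


# Hypothetical log entries, not real project data.
logs = [
    ProjectLog("P-101", "Acme", "en-fr", 12, 30),
    ProjectLog("P-102", "Acme", "en-de", 3, 25),
    ProjectLog("P-103", "Borealis", "en-fr", 9, 15),
]
print(terminology_share(logs, key=lambda log: log.client))
print(terminology_share(logs, key=lambda log: log.language_pair))
```

A client or language pair whose share stays high across several projects is a reasonable candidate for a glossary review.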

For a detailed view of how terminology consistency fits into broader quality review frameworks, our guide on measuring translation quality covers the metrics and error typologies agencies use to track it systematically.

The practical starting point

The single most actionable step for any translation team that doesn't have a formal glossary workflow: create a client glossary for your three most active accounts. Even a spreadsheet with 20–30 terms per client, linked in the project brief and shared with translators before work starts, will produce measurable consistency improvements on the next project.

Start there. Add terms after each project based on client feedback and post-editor corrections. Review the glossary when clients send revision requests. Complexity can come later—the glossary comes first, and it pays back faster than almost any other quality investment in the workflow.