How to detect AI hallucinations in translated documents before delivery

AI hallucinations in translation appear confident and fluent, making them hard to catch. This guide sets out a practical detection protocol for translation teams to run before delivery.

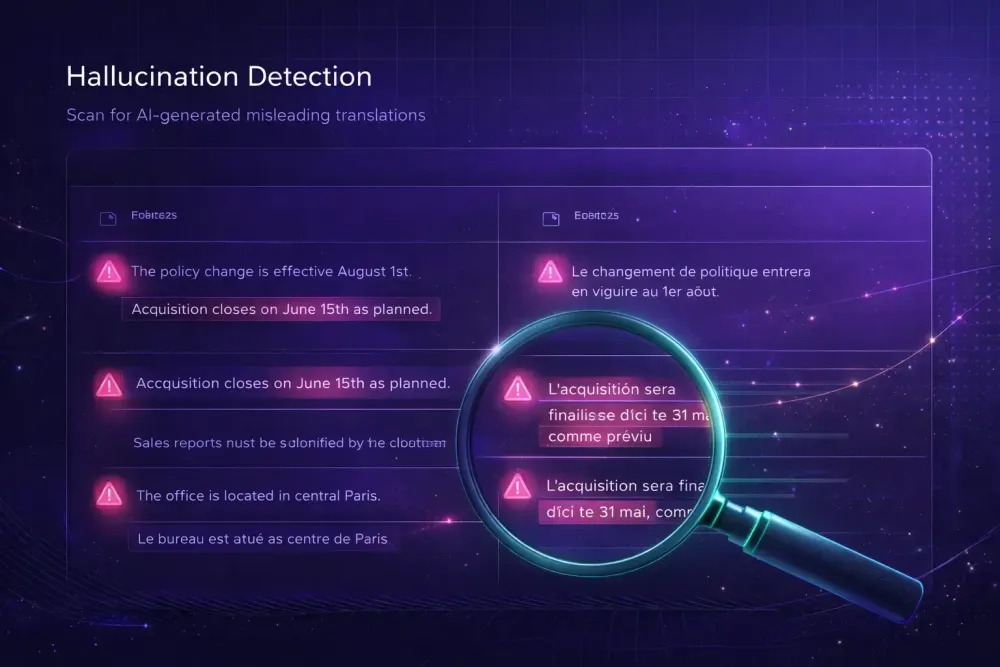

AI hallucinations in translation are more common than most agencies expect, and they rarely look wrong. When an AI engine generates fluent text that doesn't accurately reflect the source (fabricating a product specification, misrepresenting a contractual clause, or swapping a compound name for a plausible-sounding alternative), nothing in the output signals the problem. The segment reads naturally. Post-editors move on. The error ships. Catching AI hallucinations in translation before delivery requires a specific kind of structured attention, and in our experience with teams running bilingual DOCX files through AI pipelines, it is the one QA step that gets the least process design.

What AI hallucinations look like in a translated document

The core failure mode is this: the model generates text that is linguistically correct but semantically wrong relative to the source. It is a confident wrong answer, not a broken one.

Content fabrication is the most damaging variant. The AI replaces or invents information based on learned patterns rather than the actual source segment. In a pharmaceutical document we reviewed, the active ingredient concentration changed from 5 mg/mL to 50 mg/mL in translation. The surrounding text was accurate; the tenfold change in that one number was the only error, and it passed a fluency review without any flag. In a contract translation for a technology client, a limitation-of-liability clause that read "shall not exceed the purchase price" came back as "shall not apply to consequential damages." That is a legally significant substitution with no basis in the source.

A related failure is omission with fill-in. The model drops part of a source segment and generates bridging text that fits the document template but does not reflect the specific variant being described. This happens often in instruction manuals and terms-and-conditions documents where structure repeats and the model learns the pattern at the cost of reading the actual instance in front of it.

A third variant is source bleed: vocabulary or framing from earlier in the document contaminating later segments. In batch translation workflows with long context windows, the model can pull terminology from a different section or domain context, producing output that is internally consistent with what it saw earlier but inconsistent with the current source segment.

What makes all of these harder to catch than ordinary translation errors is that they do not feel wrong. A human error often creates a register mismatch or structural awkwardness that post-editors notice instinctively. An AI hallucination reads fluently and confidently, which is exactly the problem.

Why AI hallucinations in translation concentrate in certain document types

Not all documents carry equal hallucination risk. The failure mode clusters around specific characteristics, and knowing where it concentrates lets you design a proportionate review.

Technical documents with dense domain terminology are high-risk because the model has strong training priors that can override the source. Legal, medical, financial, and engineering content all fall here. The model knows what sentences in these genres typically look like, and it sometimes completes them based on that expectation rather than the actual source text. A medical protocol specifying a dosage range, a contract defining a specific indemnification scope, or an engineering spec giving a tolerance in metric units are all susceptible to confident, fluent, incorrect rendering.

Numeric-heavy documents represent a second risk category. Dates, quantities, tolerances, reference codes, percentages, and currency values are all susceptible to quiet modification. The surrounding text may be accurate while only the number changes, which makes numeric errors invisible to fluency-based review.

Repetitive document structures create a third risk pattern. Regulatory filings, product specification sheets, and terms-and-conditions documents all share a template-like structure. The model learns the template and generates the expected completion, which is often close to but not identical to the source variant for that particular item.

This does not mean AI translation is unsuitable for these document types. It means the review protocol needs to be designed around where the failures actually live.

Numeric and named entity checks: where to start

If you have limited time for hallucination detection, start with numbers and proper nouns. These categories are the most verifiable and carry the highest downstream risk when wrong.

A numeric check is straightforward: extract all numeric values from the source and confirm their presence and accuracy in the target. For shorter documents this can be done manually in a two-column spreadsheet; for longer documents, a basic script that parses segments by numeric token handles it in minutes. Dates, measurements, percentages, reference numbers, and currency values should match exactly unless the target language uses different formatting conventions, in which case the conversion should be confirmed rather than assumed.
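As a rough illustration of the scripted version of this check, the sketch below compares the numeric tokens of one source segment against its target. The function name `numeric_mismatches` and the regular expression are our own assumptions, not part of any QA tool. Tokens are compared exactly as written, so a legitimately reformatted number (for example `1,000` versus `1.000`) is also reported and left for a reviewer to confirm.

```python
import re
from collections import Counter

# Assumed pattern: digit runs with optional "." or "," groups, e.g. 5, 0.5, 1,000.
NUMBER_RE = re.compile(r"\d+(?:[.,]\d+)*")

def numeric_mismatches(source: str, target: str) -> dict:
    """Report numeric tokens that appear on one side of a segment but not the other."""
    src = Counter(NUMBER_RE.findall(source))
    tgt = Counter(NUMBER_RE.findall(target))
    return {
        # Numbers in the source with no exact counterpart in the target.
        "missing_in_target": sorted((src - tgt).elements()),
        # Numbers in the target that the source never contained.
        "unexpected_in_target": sorted((tgt - src).elements()),
    }

result = numeric_mismatches(
    "Dilute to 5 mg/mL and use within 30 minutes.",
    "Auf 50 mg/mL verdünnen und innerhalb von 30 Minuten verwenden.",
)
print(result)
# {'missing_in_target': ['5'], 'unexpected_in_target': ['50']}
```

Running this per segment, rather than over the whole document, keeps each flag tied to the segment a reviewer needs to open.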

For named entities, the check covers product names, company names, regulatory body names, geographic terms, and personal names. In one legal translation we reviewed, a party's name was rendered in three different romanizations across the same document because the model processed each occurrence independently without consistency enforcement. Automated QA tools do not typically flag this.
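One way to surface inconsistent renderings of a name is to compare every word window in the target against a list of approved names and report near matches. The sketch below uses `difflib.SequenceMatcher` from the Python standard library. The function name, the 0.8 threshold, and the sample names are illustrative assumptions, and the threshold would need tuning per language pair.

```python
import re
from difflib import SequenceMatcher

def find_name_variants(target_text, approved_names, threshold=0.8):
    """Return near-miss spellings of each approved name found in the target text."""
    words = re.findall(r"\w+", target_text)
    findings = {}
    for name in approved_names:
        n = len(name.split())
        variants = set()
        # Slide a window of the same word count as the approved name.
        for i in range(len(words) - n + 1):
            candidate = " ".join(words[i:i + n])
            if candidate == name:
                continue  # exact, approved form
            ratio = SequenceMatcher(None, candidate.lower(), name.lower()).ratio()
            if ratio >= threshold:
                variants.add(candidate)
        if variants:
            findings[name] = sorted(variants)
    return findings

text = (
    "This Agreement is made between Zhang Wei and the Supplier. "
    "Chang Wei shall notify the Supplier. Zhang Wai confirms receipt."
)
print(find_name_variants(text, ["Zhang Wei"]))
```

The output is a list of candidates for a human to confirm, not a verdict: a similar-looking string may be a different, legitimate name.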

The third check is glossary verification: for every term in your approved glossary, confirm that its target-language equivalent appears in its approved form in the translation. Smartcat's built-in QA module flags glossary violations during translation, which is one reason the glossary should be populated before the AI run. But the glossary only catches what is in it; segments outside glossary coverage are where fabrication risk is highest.
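Outside a CAT tool, the same idea can be approximated with a few lines of script. This is a minimal sketch under stated assumptions: segments are `(source, target)` pairs, the glossary is a plain dictionary, and matching is a case-insensitive substring test, so inflected forms of a target term would be reported as violations and need a manual look. The function name `glossary_violations` is hypothetical.

```python
def glossary_violations(segments, glossary):
    """List (segment number, source term, approved target term) for each missed term.

    segments: list of (source, target) string pairs
    glossary: dict mapping source term -> approved target term
    """
    violations = []
    for number, (source, target) in enumerate(segments, start=1):
        for src_term, tgt_term in glossary.items():
            in_source = src_term.lower() in source.lower()
            in_target = tgt_term.lower() in target.lower()
            if in_source and not in_target:
                violations.append((number, src_term, tgt_term))
    return violations

segments = [
    ("The Agreement terminates on notice.", "Der Vertrag endet mit Kündigung."),
    ("This Agreement is binding.", "Diese Vereinbarung ist bindend."),
]
print(glossary_violations(segments, {"agreement": "Vertrag"}))
# [(2, 'agreement', 'Vertrag')]
```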

Together, these three checks take fifteen to thirty minutes for a typical 5,000-word document and catch the majority of high-severity hallucinations without requiring a full segment-level review.

Terminology drift versus fabrication: knowing which problem you are solving

It helps to separate two related problems that often get grouped under the hallucination label.

Terminology drift is when the AI uses a valid but inconsistent target-language term for the same source term: "agreement" in one segment, "contract" in another, "arrangement" in a third. Each translation is defensible on its own. The problem is inconsistency across the document. Drift is a glossary problem. It is caught by glossary verification and TM-based review, and it is addressed in preparation rather than in post-delivery correction.
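Drift of this kind can be sketched as a simple tally: for each source term, record which of its known renderings appear in the target, and report terms with more than one. The `candidates` mapping, the German source term, and the function name are assumptions for illustration; in practice the list of possible renderings would come from a termbase or TM.

```python
from collections import defaultdict

def detect_drift(segments, candidates):
    """Return source terms rendered more than one way, with the segment numbers.

    segments: list of (source, target) string pairs
    candidates: dict mapping source term -> list of possible target renderings
    """
    seen = defaultdict(lambda: defaultdict(list))
    for number, (source, target) in enumerate(segments, start=1):
        for src_term, renderings in candidates.items():
            if src_term.lower() not in source.lower():
                continue
            for rendering in renderings:
                if rendering.lower() in target.lower():
                    seen[src_term][rendering].append(number)
    # Only terms with two or more distinct renderings count as drift.
    return {term: dict(by_r) for term, by_r in seen.items() if len(by_r) > 1}

segments = [
    ("Der Vertrag beginnt am 1. Mai.", "The agreement starts on 1 May."),
    ("Der Vertrag endet am 1. Juni.", "The contract ends on 1 June."),
    ("Die Parteien unterzeichnen.", "The parties sign."),
]
print(detect_drift(segments, {"Vertrag": ["agreement", "contract", "arrangement"]}))
# {'Vertrag': {'agreement': [1], 'contract': [2]}}
```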

Fabrication is when the target output has no plausible semantic correspondence to the source. The model inserted something that is not in the source, or it replaced source content with something invented. This is harder to catch systematically because you are looking for an absence: a correspondence that should exist but does not.

The practical way to separate them in a review workflow: after the glossary and numeric check, do a spot-check of segments that fall outside your glossary coverage. These are the segments where the model had the most latitude. You do not need to review every segment, only the ones where uncontrolled model behavior was most likely.

For documents above 10,000 words, spot-check at least 10 percent of segments outside glossary coverage, with a full read of any segment touching definitions, quantities, exclusions, warranties, or regulatory references. For high-stakes document categories such as legal or pharmaceutical content, that threshold should be higher.
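The selection rule can be sketched as follows, assuming segments are `(source, target)` pairs and that "outside glossary coverage" means the source contains no glossary term. The marker list is a hypothetical stand-in for definitions, exclusions, warranties, and regulatory references; a real one would be tuned per document type. Any digit in the source is treated as a quantity.

```python
import math
import random

# Hypothetical markers; not an exhaustive or authoritative list.
RISK_MARKERS = ("means", "shall not", "exclud", "warrant", "regulation")

def touches_risk_content(source: str) -> bool:
    text = source.lower()
    has_quantity = any(ch.isdigit() for ch in text)
    return has_quantity or any(marker in text for marker in RISK_MARKERS)

def select_for_review(segments, glossary_terms, rate_percent=10, seed=0):
    """Pick segment indices for a full read and for a random spot-check."""
    full_read = [i for i, (src, _tgt) in enumerate(segments) if touches_risk_content(src)]
    uncovered = [
        i for i, (src, _tgt) in enumerate(segments)
        if not any(term.lower() in src.lower() for term in glossary_terms)
    ]
    # Round up so that "at least 10 percent" holds for small documents too.
    k = math.ceil(len(uncovered) * rate_percent / 100)
    sampled = sorted(random.Random(seed).sample(uncovered, k))
    return {"full_read": full_read, "sampled": sampled}

segments = [("The device is shipped in a box.", "...")] * 20
segments.append(("Liability shall not exceed the purchase price.", "..."))
plan = select_for_review(segments, ["purchase price"])
print(plan["full_read"], len(plan["sampled"]))
# [20] 2
```

Fixing the seed makes the sample reproducible, which helps if a second reviewer later needs to see which segments were checked. For high-stakes categories, `rate_percent` is the value to raise.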

When automated QA catches hallucinations and when it does not

Automated QA tools are genuinely useful for a real subset of hallucination types. They reliably catch glossary violations, missing placeholders, numeric mismatches where formatting rules are defined, tag errors, and whitespace issues. Running automated QA before human review is the right sequence: it removes mechanical errors before a human spends time on them.

What automated QA does not catch is semantic drift, where the model generated text that is fluent and grammatically correct but means something different from the source. Detecting that requires a reviewer who can read both source and target and compare meaning, not form.

A QA report with no flags tells you that the structure is intact, glossary terms appear, and defined numeric rules were satisfied. It says nothing about whether a segment was fabricated, softened, or transposed while remaining structurally valid. This is a structural limitation of automated QA, not a flaw in any particular tool.

Smartcat's Translation Quality Score (TQS) evaluates AI output quality per segment and routes below-threshold segments toward human review rather than auto-confirming them. That is a useful quality gate, but a high TQS still does not guarantee semantic accuracy against the source. The score reflects quality relative to what the model knows, not fidelity relative to the actual source document.

For legal, medical, or regulatory documents, build human verification into the workflow for semantic categories where automated tools are silent.

Building a practical hallucination detection protocol

A systematic protocol does not mean reviewing every segment. It means reviewing the right segments in a defined order.

Start with automated QA. Resolve all flags related to glossary terms, numeric content, and tags. This baseline should be clean before human review starts.

Then run targeted manual checks. Extract numeric values from the source and confirm the targets, which takes five to ten minutes for a 5,000-word document. Scan for proper nouns and confirm consistency across the document. Review 10 percent of segments outside glossary coverage, sampled at random or weighted toward sections containing definitions, quantities, or exclusion language.

For the highest-risk document categories, add a semantic review pass where a post-editor reads source and target together for the opening and closing sections of the document, plus any section with definitional, quantitative, or exclusion content.

Keep a log of what you find across projects. Hallucination patterns repeat by document type, language pair, and subject domain. An internal record of where errors concentrated in past projects is one of the most practical QA assets an agency can maintain, and it lets you calibrate review intensity per project type rather than applying the same protocol to everything.
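Such a log does not need special tooling. As a minimal sketch, each finding can be stored as a small record and tallied by document type, language pair, and error kind. The field names and category labels below are our own assumptions, and the sample entries are placeholders rather than real project data.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass(frozen=True)
class Finding:
    project: str
    doc_type: str        # e.g. "contract", "manual"
    language_pair: str   # e.g. "en-de"
    error_kind: str      # e.g. "numeric", "fabrication", "omission", "source_bleed"

def hotspots(findings):
    """Count findings per (doc_type, language_pair, error_kind), most frequent first."""
    tally = Counter((f.doc_type, f.language_pair, f.error_kind) for f in findings)
    return tally.most_common()

# Placeholder entries for illustration only.
log = [
    Finding("P-001", "contract", "en-de", "fabrication"),
    Finding("P-002", "contract", "en-de", "fabrication"),
    Finding("P-003", "manual", "en-fr", "numeric"),
]
for key, count in hotspots(log):
    print(key, count)
```

The combinations at the top of the tally are the project types where a heavier review pass is most likely to pay off.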

If you run AI translation on Smartcat bilingual DOCX files and want QA artifacts that give reviewers a structured starting point, SnapIntel produces QA reports alongside translated outputs rather than requiring teams to audit from scratch.

The most reliable way to reduce hallucinations before they happen

Detection matters, but the most effective step comes before translation runs.

An approved glossary does not eliminate hallucinations. It narrows the space in which the model can be wrong. If the model has an approved term mapping, it is less likely to generate a plausible but incorrect alternative. The same applies to domain context: a model told it is translating a clinical trial protocol for the German pharmaceutical market behaves differently from one running without that instruction.

Most resources on hallucination detection focus on what to do after translation. In our experience, the most time-efficient intervention is preparation: a structured glossary build, a domain prompt review, and a clean source document before the AI ever runs.

Our overview of translation terminology management covers the glossary-build process in more detail, including approaches for extracting terminology from client materials when no existing termbase is available.

The detection protocol in this guide handles what preparation does not catch. Both matter.