AI-assisted translation vs. machine translation: what's the real difference?

AI-assisted translation and machine translation sound alike but work very differently. Here's what actually separates them for professional translators.

If you ask five people in the translation industry what "AI-assisted translation" means, you'll get five different answers — and at least two of them will be describing machine translation under a different label. That's not just a terminology problem. It leads to real mismatches between what agencies promise clients, what freelancers expect from a workflow, and what the tools actually deliver. We've spent a lot of time watching how these two approaches get conflated, and the confusion tends to cost people something: time, money, or client trust.

The distinction between AI-assisted translation and machine translation (MT) isn't academic. It affects which problems you can solve, how much human involvement a workflow actually requires, and where quality breaks down.

What machine translation actually does

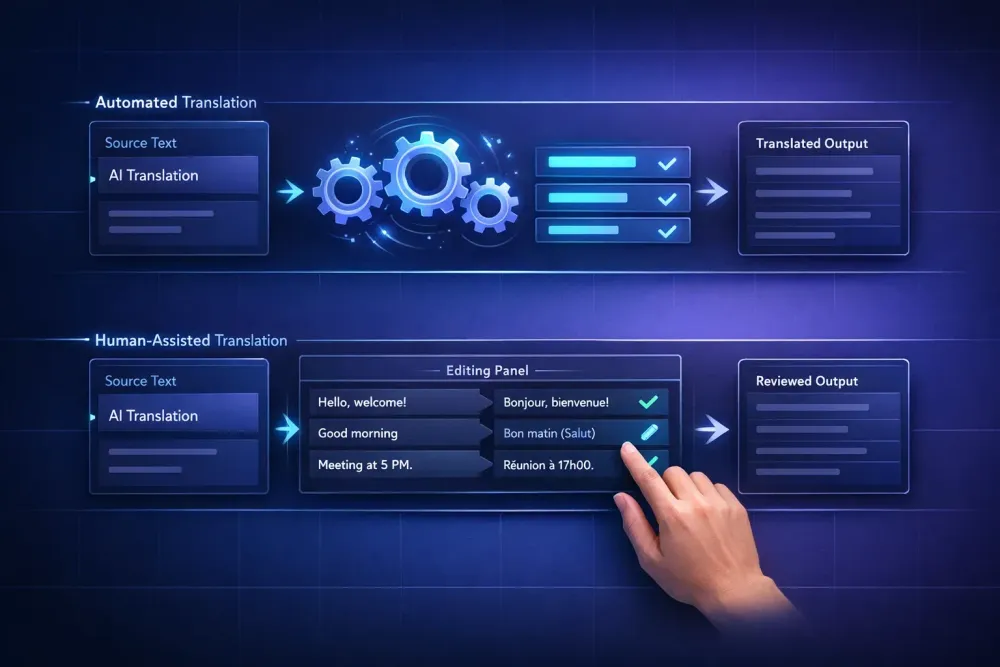

Machine translation is fully automated text conversion. You put source text in, the system returns target text, and no human is involved during that process. Neural machine translation (NMT) — the dominant approach since the mid-2010s, used by DeepL, Google Translate, and the translation engines inside most CAT tools — works by training on massive parallel corpora and learning to predict likely target-language equivalents for source-language input.

The quality of MT output has improved significantly over the last decade. For language pairs with large training datasets — English to French, English to Spanish, English to German — NMT produces fluent output that handles common sentence structures well. For lower-resource language pairs, or for highly technical domains where training data is sparse and specialized, the results vary more.

What MT doesn't do is reliably use context beyond the sentence it is translating, recognize domain-specific conventions that aren't well represented in its training data, or catch its own errors. When it goes wrong, it often goes wrong confidently: grammatically fluent output that happens to mean something different from the source, or terminology that's accurate in one domain but completely wrong in another. A neural system trained on general web data may not reliably tell "compound" in a chemistry text (a chemical compound) from "compound" in a mortgage document (compound interest), and when it picks the wrong sense, nothing in the output signals that something is off.

MT is well-suited to high-volume, lower-complexity content where speed matters more than precision — e-commerce product descriptions, support ticket responses, internal communications, first drafts of repetitive technical text. This works best when the content is formulaic enough that the engine's training data covers the patterns well. It doesn't apply cleanly when content is specialized, high-stakes, or requires cultural adaptation.

What AI-assisted translation adds to the picture

AI-assisted translation keeps the human translator in the workflow but changes what that work looks like. Instead of producing translation from scratch, the translator works with AI-generated content: reviewing it, editing where needed, confirming what's correct, and applying judgment to the cases the system handled poorly.

The working environment is usually a CAT tool editor where source and target text appear side by side. Each segment arrives with a first draft, taken from a translation memory match, generated by a machine translation engine, or both, and the translator works through those drafts rather than writing cold. Glossary suggestions appear when the tool detects a term from the project glossary in the source segment. QA checks flag potential issues before the file leaves review.
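The per-segment drafting step can be sketched roughly like this. It's a minimal illustration, not how any particular CAT tool works: the `pre_translate` and `best_tm_match` functions, the 0.75 fuzzy-match threshold, and the use of Python's `difflib` similarity ratio as a stand-in for a real TM match score are all assumptions, and `machine_translate` is a placeholder for whatever MT engine the tool calls.

```python
from dataclasses import dataclass, field
from difflib import SequenceMatcher
from typing import Callable, Optional


@dataclass
class Draft:
    source: str
    target: str
    origin: str         # "tm" if reused from translation memory, "mt" if machine-translated
    match_score: float  # similarity of the best TM source to this segment, 0.0 to 1.0
    glossary_hits: dict[str, str] = field(default_factory=dict)  # source term -> approved target


def best_tm_match(source: str, tm: dict[str, str]) -> tuple[Optional[str], float]:
    """Return (target, score) for the TM entry whose source is most similar to `source`."""
    best_target: Optional[str] = None
    best_score = 0.0
    for tm_source, tm_target in tm.items():
        # difflib ratio is a stand-in for a CAT tool's own fuzzy-match scoring.
        score = SequenceMatcher(None, source.lower(), tm_source.lower()).ratio()
        if score > best_score:
            best_target, best_score = tm_target, score
    return best_target, best_score


def pre_translate(
    source: str,
    tm: dict[str, str],
    glossary: dict[str, str],
    machine_translate: Callable[[str], str],
    fuzzy_threshold: float = 0.75,  # hypothetical cutoff
) -> Draft:
    target, score = best_tm_match(source, tm)
    if target is None or score < fuzzy_threshold:
        # No usable TM match: fall back to the MT engine for the first draft.
        # (Real tools often show the weak fuzzy match alongside the MT draft.)
        target, origin = machine_translate(source), "mt"
    else:
        origin = "tm"
    # Naive case-insensitive substring lookup; real tools handle inflection and word boundaries.
    hits = {term: tgt for term, tgt in glossary.items() if term.lower() in source.lower()}
    return Draft(source, target, origin, score, hits)


tm = {"Click Save to store your changes.": "Cliquez sur Enregistrer pour sauvegarder vos modifications."}
glossary = {"Save": "Enregistrer"}


def fake_mt(text: str) -> str:
    return f"[MT draft] {text}"  # stand-in for a real MT engine call


exact = pre_translate("Click Save to store your changes.", tm, glossary, fake_mt)
new = pre_translate("The invoice is overdue.", tm, glossary, fake_mt)
print(exact.origin, exact.match_score)  # tm 1.0
print(new.origin, new.target)           # mt [MT draft] The invoice is overdue.
```

The translator then reviews `target` for each segment, with `glossary_hits` shown as suggestions; the human judgment the rest of this article describes starts where this sketch stops.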

Post-editing, usually called MTPE (machine translation post-editing), is the most common form of this. Light post-editing means making the minimum changes needed for the output to be accurate and understandable, without polishing style. Full post-editing means revising to the quality level of a human-translated text, which can involve significant rework when the MT output is weak. A CAT editor like Smartcat's shows TM matches alongside AI output per segment, and quality scores indicate which segments are likely fine and which need close attention.
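The quality-score idea can be illustrated with a small triage sketch. Everything here is hypothetical: each segment is assumed to carry a quality estimate between 0 and 1, and 0.8 is an arbitrary cutoff between "likely fine" and "needs close attention". This isn't Smartcat's scoring or API, just the general shape of using a score to decide where review effort goes first.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    seg_id: int
    target: str
    quality: float  # hypothetical quality estimate: 0.0 (poor) to 1.0 (likely fine)


def triage(segments: list[Segment], threshold: float = 0.8) -> tuple[list[Segment], list[Segment]]:
    """Split segments into 'likely fine' and 'needs close attention', worst-scored first."""
    likely_fine = [s for s in segments if s.quality >= threshold]
    needs_attention = sorted(
        (s for s in segments if s.quality < threshold), key=lambda s: s.quality
    )
    return likely_fine, needs_attention


segments = [
    Segment(1, "Cliquez sur Enregistrer.", 0.95),
    Segment(2, "Le composé est dû mensuellement.", 0.41),
    Segment(3, "Votre commande a été expédiée.", 0.88),
    Segment(4, "Veuillez contacter l'assistance.", 0.72),
]
fine, review = triage(segments)
print([s.seg_id for s in fine])    # [1, 3]
print([s.seg_id for s in review])  # [2, 4]
```

Under full post-editing the translator still reads every segment; a score like this only orders the work. It also can't catch the confident, fluent errors described earlier if the scoring model is fooled by them too.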

The practical difference from pure MT: the human translator's expertise is still load-bearing. They catch the errors automated systems tend to miss, such as terminology errors in regulated domains, register mismatches that make a legal clause read like a marketing brochure, and culturally tone-deaf phrasing in content meant to resonate locally. The AI increases throughput; the translator remains responsible for quality.

Where the line between them gets genuinely blurry

There's real overlap at the workflow level, which is part of why people conflate them. Most professional translation workflows today involve AI at some stage, even when the output is called "human translation." The translator using a CAT tool with AI pre-translation enabled is technically using MT — the question is what happens after the system runs.

Some vendors market MT-based workflows as "AI-assisted" because a human reviews the output at the end, even if that review is cursory. Some describe a workflow as "fully automated" when a post-editor has quietly cleaned up 20% of segments before delivery. These distinctions matter when you're comparing service costs, making SLA promises, or explaining to a client what they're actually paying for.

The most honest framing: machine translation and AI-assisted translation exist on a spectrum where the variable is how much human expertise is in the loop and at what point. Pure MT on one end, full human translation with AI only for glossary suggestions on the other, and post-editing at various quality levels in between. Knowing where on that spectrum a given workflow sits is what lets you match it to the right content type and client expectation.

The MTPE productivity question nobody likes to answer honestly

One thing that surprises people who haven't run the numbers: post-editing doesn't always produce the productivity gains it promises. For well-performing language pairs and formulaic content, a translator reviewing MT output can finish significantly faster than translating from scratch. We've seen estimates of 30–50% speed improvements in technical content with strong TM coverage.

For specialized content — legal, medical, pharma — the picture is messier. When MT output is inconsistent or domain-specific terminology is wrong, the post-editor spends time not just editing but verifying: checking whether the machine's terminology choice is correct against a reliable reference, not just whether the sentence reads well. That verification overhead can reduce or eliminate the speed advantage. CSA Research has noted that in regulated domains, MTPE can actually take longer than fresh translation when MT quality is poor, because the post-editor has to work against the priming effect of seeing plausible-looking wrong output.
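To make the verification-overhead point concrete, here's a back-of-the-envelope model. The throughput figures (500 words per hour from scratch, 800 per hour post-editing, about 2 minutes to verify each flagged term) are hypothetical placeholders chosen for illustration, not measured rates, and the model ignores the priming effect entirely; it only adds verification time on top of editing time.

```python
def mtpe_time_saving(
    words: int,
    scratch_words_per_hour: float,
    postedit_words_per_hour: float,
    terms_to_verify: int = 0,
    minutes_per_verification: float = 0.0,
) -> float:
    """Fraction of time saved by MTPE versus translating from scratch.

    A negative result means post-editing took longer than fresh translation.
    """
    scratch_hours = words / scratch_words_per_hour
    mtpe_hours = (
        words / postedit_words_per_hour
        + terms_to_verify * minutes_per_verification / 60
    )
    return 1 - mtpe_hours / scratch_hours


# Formulaic content with good MT: no extra terminology checks needed.
print(mtpe_time_saving(2000, 500, 800))           # 0.375
# Same job, but 60 terms each need ~2 minutes of checking against a reference.
print(mtpe_time_saving(2000, 500, 800, 60, 2.0))  # -0.125
```

With these made-up numbers, a 37.5% saving turns into a 12.5% loss once two hours of verification are added to a four-hour job, which is the shape of the problem described above.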

This doesn't mean MTPE doesn't work in those domains — it means it has to be set up properly. A good glossary built before the MT job runs, domain-specific translation memory applied at the TM lookup stage, and a QA process that flags terminology errors automatically all change what the post-editor is looking at when they open the file.
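An automatic terminology check of the kind mentioned above can be as simple as: if a glossary source term appears in the source segment, the approved target term should appear in the target. A minimal sketch, assuming a plain source-to-target glossary; `check_terminology` and `TermIssue` are hypothetical names, and exact phrase matching will over-flag legitimate inflected variants, which real QA tools handle with morphology rules.

```python
import re
from dataclasses import dataclass


@dataclass
class TermIssue:
    segment_index: int
    source_term: str
    expected_target: str


def contains_term(text: str, term: str) -> bool:
    """Case-insensitive whole-phrase match (\\b is Unicode-aware for str patterns)."""
    return re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE) is not None


def check_terminology(segments: list[tuple[str, str]], glossary: dict[str, str]) -> list[TermIssue]:
    """Flag segments where a glossary source term appears but its approved target does not."""
    issues = []
    for i, (source, target) in enumerate(segments):
        for src_term, tgt_term in glossary.items():
            if contains_term(source, src_term) and not contains_term(target, tgt_term):
                issues.append(TermIssue(i, src_term, tgt_term))
    return issues


glossary = {"compound interest": "intérêts composés"}
segments = [
    ("Compound interest accrues monthly.", "Les intérêts composés s'accumulent chaque mois."),
    ("Compound interest is charged on the balance.", "Un composé est facturé sur le solde."),
]
for issue in check_terminology(segments, glossary):
    print(issue)
```

Only the second segment is flagged: the MT draft picked the chemistry sense of "compound", exactly the kind of fluent-but-wrong output a post-editor might otherwise read past.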

What this means for pricing and client conversations

Rate structures for MT, MTPE, and AI-assisted translation haven't standardized across the industry, which creates room for confusion on both sides of the client-agency relationship. Per-word MTPE rates are generally lower than per-word human translation rates, which is appropriate as long as the content type and quality expectations justify the lighter process. But "AI-assisted" as a label without further definition tells a client almost nothing about what they're getting.

Agencies that navigate this well tend to be explicit: this tier uses AI pre-translation reviewed by a human post-editor at a light editing standard, priced at X; this tier is full human translation with AI used only for TM and glossary lookup, priced at Y. The distinction gives clients a real choice rather than forcing them to guess whether "AI-assisted" means "touched by a human" or "fully automated with a human label on it."

For content bound for regulated industries or public legal documents, or content that carries compliance obligations, erring toward the more thorough human review tier isn't just ethically cleaner; it's risk management.

Where to put the line in practice

There's no universal answer to when AI-assisted translation is sufficient and when it isn't. Some patterns we've seen hold up across different agency contexts:

MT without post-editing works when the content is low-stakes and short-lived, speed matters more than precision, the language pair is well-supported, and errors are cheap to fix or will be caught downstream. Think internal drafts, rough research summaries, or short-lived promotional copy in markets where a client has explicitly accepted the tradeoff.

AI-assisted translation with post-editing works when content needs to meet professional quality standards, some domain-specific terminology is required, and volume makes human-only translation impractical or cost-prohibitive. Most commercial technical translation falls here.

Human translation with AI as a support tool (for TM, glossary, and QA flagging) works when the content requires cultural adaptation, carries legal or compliance weight, or represents a brand voice where language quality materially affects the outcome. High-stakes marketing, legal documents, and regulated-industry submissions belong here, or at the upper end of full post-editing with experienced domain specialists.
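The three patterns above can be read as a rough decision rule. This is a simplified sketch, assuming each criterion collapses to a yes/no flag (real projects are rarely that clean); the `ContentProfile` fields and `choose_tier` function are hypothetical, and criteria like volume and domain terminology are folded into the default post-editing branch.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    MT_ONLY = "MT without post-editing"
    MTPE = "AI-assisted translation with post-editing"
    HUMAN_WITH_AI_SUPPORT = "Human translation with AI as a support tool"


@dataclass
class ContentProfile:
    # Each flag is a hypothetical yes/no simplification of the criteria above.
    legal_or_compliance_weight: bool
    needs_cultural_adaptation: bool
    brand_voice_critical: bool
    needs_professional_quality: bool
    well_supported_language_pair: bool
    errors_cheap_to_fix: bool


def choose_tier(p: ContentProfile) -> Tier:
    # High-stakes signals win outright: keep a human translator in charge.
    if p.legal_or_compliance_weight or p.needs_cultural_adaptation or p.brand_voice_critical:
        return Tier.HUMAN_WITH_AI_SUPPORT
    # Raw MT only when the quality bar is low, the pair is well supported, and mistakes are cheap.
    if (not p.needs_professional_quality
            and p.well_supported_language_pair
            and p.errors_cheap_to_fix):
        return Tier.MT_ONLY
    # Everything else: professional quality at volume, i.e. post-editing.
    return Tier.MTPE


internal_draft = ContentProfile(False, False, False, False, True, True)
user_manual = ContentProfile(False, False, False, True, True, False)
contract = ContentProfile(True, False, False, True, True, False)
for name, profile in [("internal draft", internal_draft), ("user manual", user_manual), ("contract", contract)]:
    print(f"{name}: {choose_tier(profile).value}")
```

The ordering is the design choice that matters: high-stakes signals are checked first, so a low-effort flag elsewhere can never downgrade a contract to raw MT. Low-stakes content in a poorly supported language pair falls through to post-editing rather than raw MT.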

Knowing which category a project falls into before starting, and being clear with clients about which service model their content gets, is the most concrete step toward getting this right. The tools to support any of these workflows exist. The question is using them in a way that matches what the content actually needs.